New report comes as government convenes first tripartite meeting on AI regulation

Australia's patchwork of state and federal employment laws is leaving workers exposed as artificial intelligence embeds itself into daily working life at a pace regulators have struggled to match, according to researchers and legal experts calling for urgent national action.

A report from the John Curtin Research Centre, backed by the SDA union, has warned that without a coordinated federal response, the country risks allowing AI to become a tool for intensifying workloads, expanding surveillance and eroding job security — with no clear legal remedy for workers harmed in the process.

The warning comes as Workplace Relations Minister Amanda Rishworth convened the first meeting of a new tripartite AI forum on Wednesday, bringing together government, business groups and unions to examine how existing frameworks should respond to the technology's rapid adoption across Australian workplaces.

But the forum opened amid sharp disagreement, with unions pushing for immediate enforceable protections and employer groups urging caution against what they describe as premature intervention.

A legal maze with no map

For HR leaders and people managers, the practical challenge is acute. Workplace relations lawyer Shannon Chapman told the ABC the absence of any single overarching law governing AI at work left employers and employees in genuinely uncertain legal territory.

"If someone comes to me and asks for advice about implementing biometrics data scanners in the workplace, that's not necessarily a quick or easy answer," Chapman said. "It will be jurisdiction specific. It will depend on the type of data that's being gathered, how it's going to be stored, how it might be able to be used."

That complexity compounds when organisations operate across multiple states, each with different workplace surveillance laws. Federal anti-discrimination, human rights and Fair Work legislation also intersect in ways that remain untested in the context of AI-driven decision-making.

Chapman argued that while consistency across jurisdictions would be welcome, introducing new AI-specific legislation also carried the risk of creating additional layers of compliance burden. Any new rights around job security or restrictions on monitoring, she said, would raise immediate questions about how they interacted with existing employer obligations.

For HR professionals designing AI policies or procurement processes right now, that ambiguity is not theoretical — it is a daily operational challenge.

Skills demanded, protections lagging

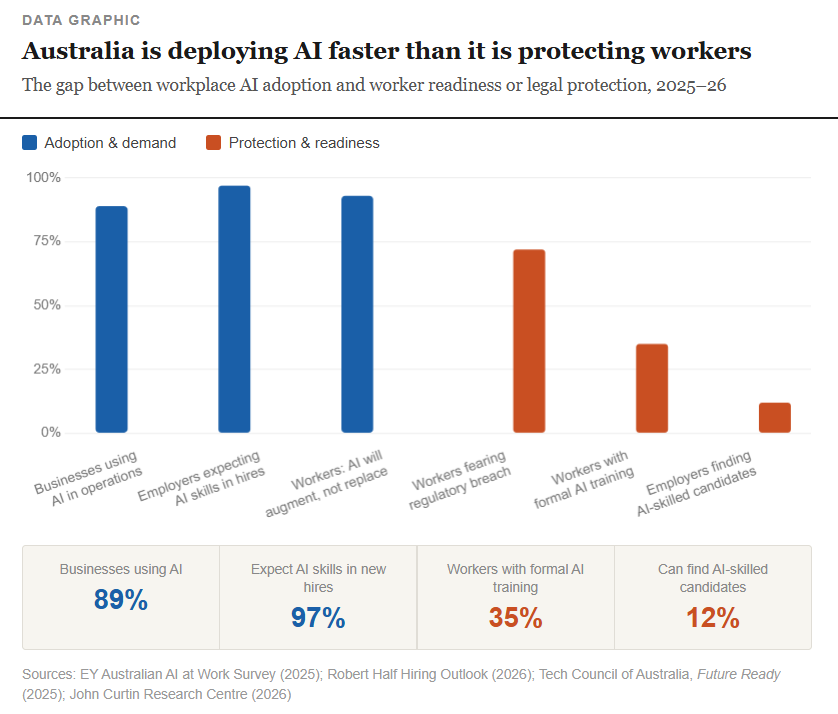

The regulatory gap is emerging precisely as AI becomes a baseline hiring expectation. Research from Robert Half found that 97% of Australian hiring managers now expect new employees to have AI and automation skills, yet 88% report difficulty finding candidates who can demonstrate them.

More than four in five workers surveyed believe generative AI proficiency is necessary for career advancement, while nearly nine in ten businesses are already using AI across at least one core function — finance, HR or technology.

The hiring picture is further complicated by AI's role in the recruitment process itself. More than a third of hiring managers say AI-generated applications make it harder to assess genuine candidate quality, prompting many organisations to add skills testing and more rigorous interview processes.

Yet as employers raise the bar on AI capability, the legal framework governing how AI is used to manage, monitor and assess those same workers has not kept pace.

Unions push back, business urges restraint

The ACTU and Australian Services Union are among the voices arguing that the window to shape sensible regulation is narrowing fast.

Australian Services Union national secretary Emeline Gaske told the AFR her members in IT and administrative roles were already experiencing what she described as "AI-driven productivity demands, after-hours messaging, and the threat of digital surveillance."

"We do not agree that AI is not affecting jobs," Gaske said, pointing to thousands of job cuts across Australian technology firms. "The best time to regulate to make sure it's done in a fair way is before the horse has bolted."

Finance Sector Union national assistant secretary Nicole McPherson argued that without enforceable rules, voluntary principles would do little to change corporate behaviour. She claimed some organisations were deliberately obscuring the link between AI and job losses by outsourcing roles before deploying the technology — allowing them to argue no direct displacement had occurred.

The Business Council of Australia, however, backed Minister Rishworth's more cautious stance, with chief executive Bran Black pointing to the European Union and Canada as cautionary examples of jurisdictions where early regulation deterred investment.

"We've seen other countries taking this approach," Black told the AFR Workforce Summit. "Both are starting to roll back their position because they've realised they missed out on investments, they missed out on opportunities."

What the report recommends

The John Curtin Research Centre's recommendations are directed squarely at closing the governance gap before it widens further. The report calls for a national AI taskforce, a review of the Fair Work Act to address AI-related risks, and a requirement for mandatory human oversight wherever AI is deployed in workplace settings.

A central recommendation is the creation of an AI expert advisory panel within the Fair Work Commission, giving the tribunal specialist capacity to assess AI-related disputes and ensure existing protections remain meaningful in practice.

The report also calls for mandatory consultation with workers and unions before AI tools are introduced, and for universal access to AI education and upskilling — a point echoed by the ASU's call for a large government-funded training program.

Report co-author Dominic Meagher said the scale of disruption ahead demanded a response commensurate with the challenge.

"AI is so much more powerful than social media," Meagher told the ABC. "We do not have the luxury of getting it wrong this time."

He was emphatic that the report was not anti-technology, noting that companies integrating AI collaboratively with their workforces were seeing genuine productivity and profitability benefits. "Just because AI makes a decision, it doesn't mean that it's an excuse for the company to sidestep their obligations," he said.

What HR leaders should do now

While the policy debate plays out, legal and technology experts are urging people managers not to wait for legislation before putting internal frameworks in place.

Chapman said the starting point for any organisation was ensuring employment contracts, workplace policies and training programs clearly addressed AI use — including what was and was not permissible, and what the consequences of a breach would be.

"Do you have a policy about AI use? What does it say? Does it clearly tell your employees what the consequences are if they breach that? Have you trained your employees on it?" she said.

Digital forensics expert Matt O'Kane cautioned that many AI monitoring tools entering the Australian market were developed in jurisdictions with markedly different expectations around workplace privacy. Employers adopting international platforms needed to actively test whether those tools operated in ways consistent with Australian norms.

"Just because it's AI, it doesn't remove your personal responsibility," O'Kane said. "You need to test that AI to make sure it operates in your name in a way that you're happy with as an employer."

Minister Rishworth flagged that her immediate concern was not job displacement but work intensification — the risk that AI compresses human effort into unsustainable output demands rather than genuinely alleviating workload.

"I'm not 100% sure that the recent adoption has led to people sitting around twiddling their thumbs," she said. "My mind is more focused on making sure we don't have cognitive burnout."

Safe Work Australia is currently conducting an occupational health and safety review into AI-linked work intensification and psychosocial risk — a signal that even without new legislation, the regulatory machinery is beginning to turn.

For HR professionals, that review may be the most immediate compliance pressure on the horizon.